Bitcoin is literally a toy people use for fun activities such as executing contracts (“Blow up this bridge / solve this math problem and I will give you $5 / a Delta Force Fentanyl Lollipop”) … and nothing else.

This entire media landscape is as absurd as saying “The Chess Industry Was Ruined by Magnus” or “Playing Chess Will Destroy All the Trees to Make the Boards!”

There are still maybe 12–14 people alive on Earth, and not in jail, who can explain what a bitcoin *is* or who have *seen* The Actual Blockchain.

Are you one of them? No.

Do you know one of them? Doubt it.

Do you think someone will tell you? Good luck / get plucked.

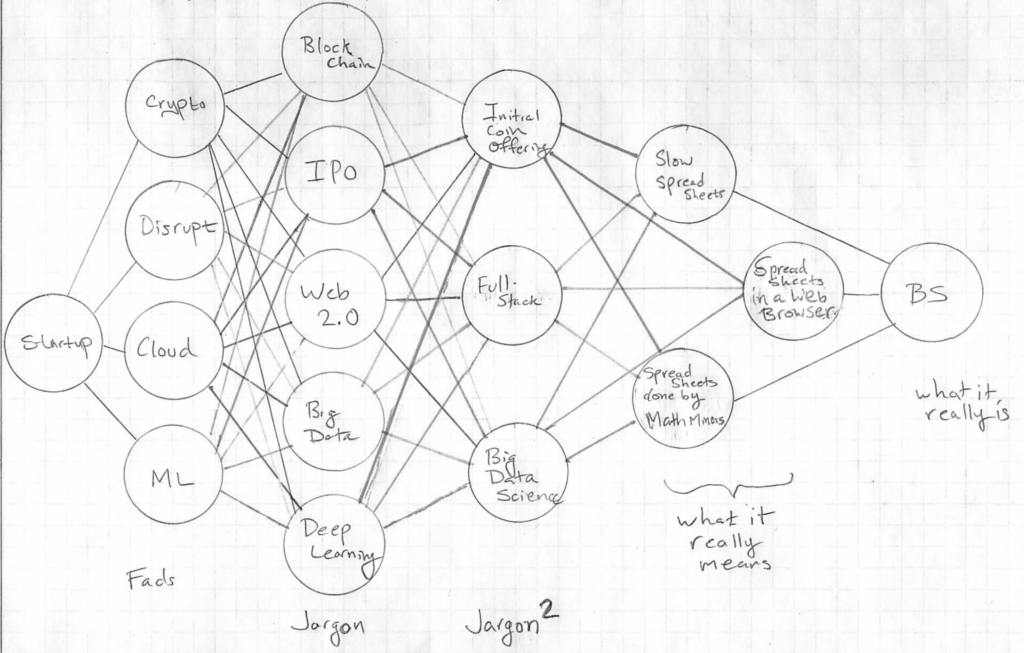

What drives the waste and regulatory capture, and keeps it creeping back in, is that people want to make money by adding an abstraction layer to something that was designed specifically not to have any abstraction layers. We saw this repeatedly in business school, where people would deadass, with a straight face, say “we can get businesses to use it as a perk,” then take $500 from each employee to give them a $10 voucher that will be worthless later. In business that's churn; in biology, we call it parasitic.

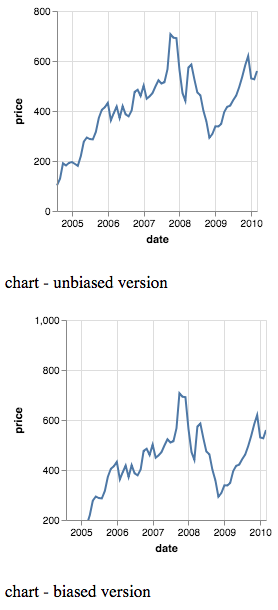

Meaning: China is absolutely correct to call it a waste, and the US is absolutely correct to just store raw BTC instead of letting the private sector repeatedly thrash the economy demanding that a market suddenly be created for wares no one needs. It is a waste: BTC is, in a sense, the bottles and cans left over from a party.

You can reuse them for whatever you want, but they are still toxic waste barrels no matter what label gets slapped on them. That's where I see the waste and overhead coming in. Instead of building warehouses and terminals, people have built arbitrage and speculation platforms, assuming these are goods and not … cargo containers.

What *is* of value? The entire Blockchain. The Satoshi sector.

How do you get value out of those? By not diluting them with service layers that cost money to build and therefore have to recoup the investment. No one claims we need to make the trains, roads, and landfills pay for themselves. So again, the problem as I see it (and the reason Satoshi thinks all of you suck) is that you keep trying to steal Lego from people having fun, spray-glue it into a product, box it, ship it around the world, sell it, and keep it in expensive storage. Eventually that bill has to be paid, but this bundling is non-essential rent-seeking.